✍️ Scott Walldren is the Head of SEO at Acadia.

Most marketing teams focus on creating content. The bigger issue is whether existing signals actually align.


Your content strategy is solid. Technical SEO is dialed in. Brand positioning is clear. But when prospects research your company through AI tools, the descriptions that come back are vague, generic, or don't match your current positioning at all.

This isn't a ranking problem. It's a clarity problem.

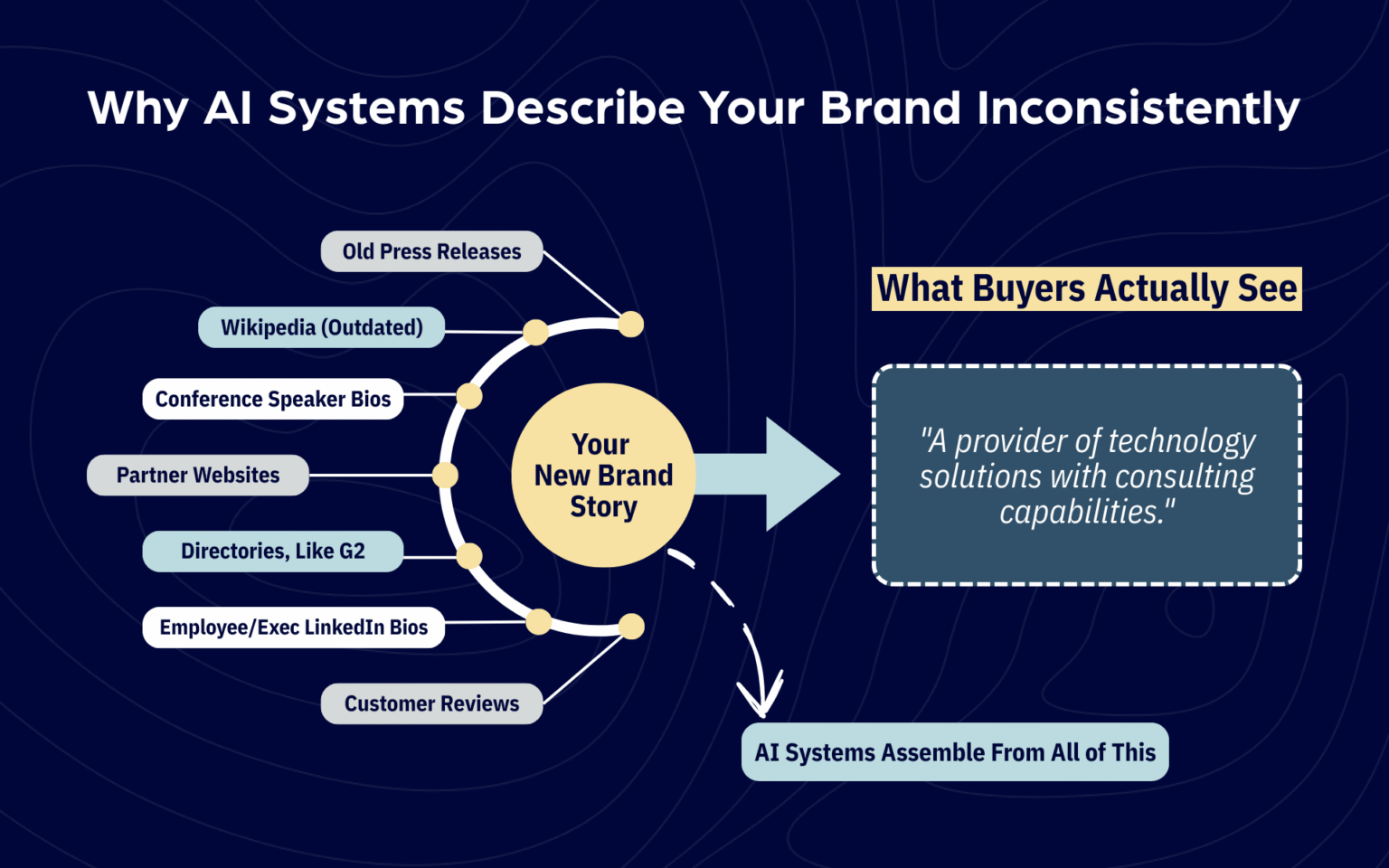

AI systems (whether that's ChatGPT, Perplexity, Google's AI Overviews, or any of the dozen other tools buyers now use to research vendors) assemble their understanding of your brand from multiple sources across the web. When those sources tell different stories, the systems hedge. They produce generic descriptions, revert to older positioning that was more frequently repeated, or simply fail to classify you confidently in the categories where you actually compete.

Most marketing teams optimize forward: new content, new campaigns, new positioning. What they don't do is audit whether the signals that already exist across the web actually align with each other.

The result? Legacy press releases describe an older product set. Partner websites use outdated positioning. Executive bios on LinkedIn emphasize different value propositions than the company website. Customer reviews and testimonials reference capabilities you've moved beyond.

Each of these individually seems minor. Collectively, they create exactly the kind of signal confusion that AI systems surface immediately, often before a prospect ever talks to your sales team.

You can't optimize your way out of a consistency problem. You have to audit your way into one.

Where Marketing Teams Lose Control of Their Own Narrative

Here's what this looks like in practice, across three common scenarios that show up regardless of industry:

Legacy Descriptions Persist Longer Than You Think

A B2B SaaS company evolved from point solution to platform eighteen months ago. The website reflects the platform positioning. Marketing materials were updated. Sales got trained on the new messaging.

But:

- Press releases from two years ago still describe the narrower original product.

- Directory profiles on G2, Capterra, and industry-specific sites haven't been touched.

- Partner co-marketing pages still use the old language.

When AI systems scan for information about the company, they see far more repetition of the old positioning than the new one, and they weight it accordingly. Eighteen months after the repositioning, automated summaries still describe the company as a point solution. The platform story barely registers.

Different Teams Describe The Company Differently

A healthcare technology company's marketing site emphasizes integrated patient experience and clinical workflow efficiency. But look across other surfaces:

- Product documentation focuses on technical specifications and compliance certifications.

- Sales decks lead with cost savings and ROI.

- Executive LinkedIn bios highlight different aspects of the value proposition depending on who wrote them.

- Customer success materials use entirely different language to describe the same capabilities.

When AI systems try to synthesize a description, they see conflicting signals and produce something cautiously generic. Instead of confident positioning, prospects get hedge language: "offers various healthcare solutions" or "provides services for medical organizations."

Third-Party Descriptions Haven't Kept Pace With Your Evolution

A mid-market retail brand expanded from a single product category into adjacent categories over the past two years. The business clearly moved beyond its original focus. But external signals haven't caught up:

- Customer reviews, case studies, and testimonials still overwhelmingly reference the original category.

- Media coverage and industry analyst mentions reflect the old positioning.

- Partners and resellers describe the company based on what they knew when the relationship started.

AI systems treat these third-party sources as high-signal corroboration, often weighting external validation more heavily than first-party claims. The result: automated comparisons and recommendations continue slotting the brand into its original, narrower category.

The performance impact shows up in recognizable patterns:

- Prospects arrive at sales conversations with an outdated or confused understanding of what you actually do.

- You appear in the wrong competitive comparison sets.

- AI-generated category recommendations leave you out entirely because the systems can't confidently classify where you belong.

- Questions that should have been answered during initial research come up repeatedly in discovery calls.

AI discovery doesn't reward the best content. It rewards the most consistent signal.

Why Good Marketing Teams Still Have This Problem

Marketing operates in campaign cycles and launch windows. Rebrand the company, update the website, create fresh content, train the sales team, and move to the next priority. The implicit assumption is that new signals automatically replace old ones over time. They don't.

Old information persists because it exists in places marketing doesn't directly control and often doesn't even track:

- Press coverage creates permanent records that get indexed and referenced for years.

- Partner websites and co-marketing materials require manual coordination to update.

- Directory profiles and review sites get set up once and are rarely revisited.

- Third-party discussions in forums, on Reddit, and in analyst reports continue using whatever language those communities adopted when they first encountered your brand.

Meanwhile, different internal teams develop their own versions of brand language over time. Product marketing emphasizes technical differentiation. Sales focuses on business outcomes. Customer success speaks to implementation and support. Recruiting materials highlight culture and mission. None of this is wrong individually, but collectively it creates exactly the kind of signal fragmentation that AI systems surface.

Recent research from SparkToro analyzing thousands of AI tool responses found that AI tools rarely give the same list of brand recommendations twice, even when asked identical questions. As researcher Rand Fishkin concluded: "AIs are probability engines: they're designed to generate unique answers every time. Thinking of them as sources of truth or consistency is provably nonsensical."

This randomness in AI outputs makes input consistency even more critical. As I wrote when Apple and Google announced their AI partnership, the fundamentals that made brands discoverable in traditional search still matter in AI discovery. They just matter more. Clear positioning, consistent signals, and authoritative validation aren't new requirements. They're existing requirements that now carry higher stakes.

This means the only lever brands have is ensuring the signals these systems pull from are themselves consistent. When input signals conflict, output descriptions become unreliable. Not because the AI is wrong, but because there's no single coherent story to tell.

The AI discovery layer exposes inconsistencies that already existed, and it surfaces them faster. Previously, a confused prospect would still show up in a sales conversation, where the confusion could be corrected. Now the confusion sets in during the automated research phase, and you may never get the meeting at all.

This isn't an AI problem. It's an organizational alignment problem that AI makes visible earlier in the buyer journey.

The Signal Consistency Audit

Most marketing teams don't need more content. They need to align what already exists.

This audit doesn't require new tools or specialized platforms. It requires a systematic review of where your brand appears across the web and whether the language actually matches. Think of this as forensic work, not creative work.

First-Party Signal Alignment

Start with surfaces you directly control. Compare your primary website positioning against About pages on secondary properties (product sites, regional sites, legacy domains). Review executive bios across LinkedIn, your company site, and speaker profiles for industry events. Pull boilerplate language from press releases, media kits, and investor materials from the past 24 months. Audit product and service descriptions across different sections of your main site.

The question you're answering: Do we describe ourselves the same way everywhere we control the message?

What you're looking for:

- Places where language drifted over time

- Where different teams developed their own descriptions

- Where positioning evolved, but older properties never got updated

This is rarely malicious or careless. It's structural. Marketing updates the main site, but no one thinks to update the careers page, the partner portal, or the regional microsites.
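None of this needs code, but if you have many owned properties, a short script can speed up the side-by-side comparison. The sketch below is one possible approach, not a prescribed method: it scores each collected description against a reference description using Python's standard-library `difflib`. The company name, the sample copy, and the 0.8 threshold are all hypothetical placeholders you would replace with your own.

```python
from difflib import SequenceMatcher

# Hypothetical reference description and collected copy; replace with your own.
CANONICAL = "Acme is a workflow automation platform for mid-market finance teams."

descriptions = {
    "homepage": "Acme is a workflow automation platform for mid-market finance teams.",
    "careers page": "Acme builds invoice-scanning software for accountants.",
    "press boilerplate": "Acme is a workflow automation platform for finance teams.",
}


def similarity(a: str, b: str) -> float:
    """Return a 0-1 ratio of how closely two strings match, ignoring case."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def flag_drift(canonical, found, threshold=0.8):
    """List (surface, score) pairs scoring below the threshold, lowest first."""
    scores = [(name, round(similarity(canonical, text), 2)) for name, text in found.items()]
    return sorted((s for s in scores if s[1] < threshold), key=lambda s: s[1])


# Print the surfaces whose wording has drifted furthest from the reference.
for surface, score in flag_drift(CANONICAL, descriptions):
    print(f"{surface}: {score}")
```

Character-level similarity is a blunt instrument. Treat a low score as a prompt to read the page, not as a verdict on it.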

Third-Party Signal Inventory

Map where third parties describe your brand and how current those descriptions are:

- Partner websites and co-marketing materials

- Directory and review site profiles (G2, Capterra, industry-specific platforms)

- Media coverage and analyst mentions from the past 18-24 months

- Customer language in reviews, testimonials, and case studies

- Community discussions on Reddit, industry forums, or professional networks

The question you're answering: How are others describing us, and does it match our current positioning?

What you're looking for is lag: partners still using language from when the relationship started, review sites with descriptions written years ago that no longer reflect your product set, media coverage that described you accurately at the time but hasn't kept up as you've evolved, and customer testimonials that reference old capabilities or positioning.

You don't control these surfaces directly, but you often have more influence than you think. Partners will update co-marketing materials if you provide them with the current language. Directory profiles can be claimed and updated. Media contacts will use whatever boilerplate you send them. The issue isn't access. It's that no one systematically audits and addresses these surfaces.
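If you keep the third-party inventory in a spreadsheet, flagging lag can be scripted. The following is a minimal sketch under stated assumptions: each row records a surface, the date it was last updated, and whether a reviewer judged it to match current positioning. The rows, the field layout, and the 18-month cutoff (taken from the low end of the window mentioned above) are illustrative, not a standard.

```python
from datetime import date

# Hypothetical inventory rows: (surface, last_updated, matches_current_positioning)
inventory = [
    ("G2 profile", date(2021, 3, 1), False),
    ("Partner co-marketing page", date(2024, 11, 15), True),
    ("Trade press feature", date(2022, 6, 10), False),
]


def months_between(earlier: date, later: date) -> int:
    """Whole calendar months elapsed from earlier to later."""
    months = (later.year - earlier.year) * 12 + (later.month - earlier.month)
    if later.day < earlier.day:
        months -= 1  # the final month is not yet complete
    return months


def stale_entries(rows, today, max_age_months=18):
    """Return (surface, age) for rows that are too old or off-message, oldest first."""
    flagged = [
        (name, months_between(updated, today))
        for name, updated, matches in rows
        if not matches or months_between(updated, today) > max_age_months
    ]
    return sorted(flagged, key=lambda row: row[1], reverse=True)


# A fixed reference date keeps the example reproducible; use date.today() in practice.
for surface, age in stale_entries(inventory, today=date(2025, 1, 1)):
    print(f"{surface}: last updated {age} months ago")
```

The output is simply an outreach list: the surfaces to claim, refresh, or send current boilerplate to first.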

Cross-Functional Language Consistency

Review how different internal teams describe the company in their materials:

- Sales deck positioning versus marketing site messaging

- Product documentation versus brand guidelines

- Customer success communications versus company positioning

- Recruiting materials and employer brand language versus commercial messaging

The question you're answering: Are different teams using the same brand language, or has each developed its own version?

What you're looking for is drift in how different teams describe the company. Sales might emphasize ROI and business outcomes. Marketing leads with innovation and differentiation. Product focuses on technical capabilities. Customer success talks about implementation and support. Each makes sense in context, but when AI systems try to synthesize across all these signals, they produce something muddled.

This audit doesn't require sophisticated tracking platforms or AI monitoring tools. It requires opening documents, visiting websites, and comparing language side by side. The work is tedious, not complex. But it's also the only way to see where your brand story fragments across the web.

Building Systematic Signal Consistency

Signal alignment isn't a one-time project. It's an operational practice that needs to happen whenever positioning changes, product lines expand, or strategic direction shifts. But getting to baseline consistency is straightforward work that most marketing teams can accomplish in 60-90 days.

Establish Canonical Brand Language

This isn't about crafting a perfect tagline or clever positioning statement. It's about creating a clear, repeatable description of what the company is, who it serves, and what problems it solves. Language that every team can use verbatim without adaptation.

The test: Can leadership, marketing, sales, and product all use this exact language in their materials without feeling like it doesn't fit their audience or context? If the answer is no, the language isn't canonical yet. It's still positioning language that needs interpretation.

This description should be boring and functional. Not creative. Not clever. Just clear enough that when it gets repeated across dozens of surfaces, AI systems understand exactly what category you belong in and what you actually do.

Update Owned Properties Systematically

Once canonical language exists, updating first-party properties systematically is straightforward execution:

- About pages

- Executive bios

- Boilerplate language in press materials

- Product descriptions

This isn't creative work or messaging refinement. It's implementation. Copy the canonical language and replace whatever's currently there. The goal is repetition, not innovation. When AI systems see the same description across your website, your executives' LinkedIn profiles, your press boilerplates, and your directory listings, they gain confidence in that description's accuracy.

This work also surfaces properties you forgot existed:

- The careers page that hasn't been updated in three years

- The partner portal with outdated positioning

- The regional microsite using language from a previous brand iteration

Bringing these into alignment prevents AI systems from finding conflicting signals when they scan your owned properties.
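Because the goal here is verbatim repetition, a simple presence check is enough to confirm each owned page actually carries the canonical text. This is a hedged sketch, assuming you have already saved each page's copy as plain text. The company name, page names, and copy below are hypothetical.

```python
import re

# Hypothetical canonical description; replace with your own.
CANONICAL = "Acme is a workflow automation platform for mid-market finance teams."

# Hypothetical page copy, e.g. pasted or exported from each owned property.
pages = {
    "about": "About us.\nAcme is a workflow automation platform\n for mid-market finance teams.",
    "careers": "Join Acme, the invoice-scanning company.",
    "press": "ACME is a workflow automation platform for mid-market finance teams.",
}


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so formatting differences don't hide a match."""
    return re.sub(r"\s+", " ", text).strip().lower()


def missing_canonical(canonical: str, pages: dict[str, str]) -> list[str]:
    """Return names of pages whose copy does not contain the canonical text."""
    needle = normalize(canonical)
    return [name for name, copy in pages.items() if needle not in normalize(copy)]


# Pages listed here still need the canonical language copied in.
print(missing_canonical(CANONICAL, pages))
```

Normalizing case and whitespace is a deliberate choice: line breaks and capitalization vary by template, and they shouldn't count as a mismatch.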

Address High-Signal Third-Party Sources

Not all third-party mentions carry equal weight in AI systems' understanding. As a rough guide to priority:

- Partner profiles on major platforms matter more than a mention in a niche blog post

- Directory listings on G2 or Capterra matter more than forum comments

- Recent media coverage matters more than years-old press

Focus on the surfaces that AI systems treat as authoritative sources:

- Update partner co-marketing materials by providing current boilerplates

- Claim and refresh directory profiles that haven't been touched in years

- Send updated media kits to journalists and analysts who cover your industry

- Request testimonial updates from customers whose language reflects old positioning

You won't eliminate all third-party lag, and you shouldn't try. The goal isn't control of every mention. It's ensuring the highest-signal third-party sources reinforce rather than contradict your first-party positioning.
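One way to turn that prioritization into a working order is a simple scoring pass over your outreach list. The sketch below is illustrative only: the tier numbers and the 12-month recency cutoff are assumptions chosen to mirror the comparisons above, not measurements of how any AI system actually weights sources.

```python
# Illustrative tiers only; these numbers are assumptions, not measured AI weights.
SOURCE_TIER = {
    "partner_profile": 3,
    "directory_listing": 3,
    "media_coverage": 2,
    "niche_blog": 1,
    "forum_comment": 1,
}


def priority(source_type: str, age_months: int) -> float:
    """Score a source: higher tier and more recent sources rank first."""
    recency = 1.0 if age_months <= 12 else 0.5
    return SOURCE_TIER.get(source_type, 1) * recency


# Hypothetical worklist rows: (label, source_type, age_in_months)
worklist = [
    ("Old forum thread", "forum_comment", 40),
    ("G2 listing", "directory_listing", 8),
    ("Recent trade article", "media_coverage", 3),
    ("Five-year-old press hit", "media_coverage", 60),
]

# Highest-priority sources first.
ranked = sorted(worklist, key=lambda item: priority(item[1], item[2]), reverse=True)

for label, source_type, age in ranked:
    print(f"{priority(source_type, age):.1f}  {label}")
```

Adjust the tiers to fit your own category. The point is to work the list from the top down rather than chase every mention.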

Align Cross-Functional Teams On Language Discipline

Sales enablement, product marketing, customer success, recruiting: every team that describes the company externally needs to use the same core language. Not because of brand police enforcement, but because inconsistency creates exactly the ambiguity AI systems surface to prospects.

This requires ongoing coordination, not just initial alignment:

- When positioning evolves, all teams update simultaneously

- When new products launch, everyone uses the same language to describe them

- When customer-facing materials get created, they draw from canonical descriptions rather than each team inventing its own version

The organizational challenge here is harder than the execution challenge. It requires discipline not to reinvent language every time a new deck gets created or a new campaign launches. But that discipline is what prevents signal fragmentation from recurring six months after you've just cleaned it up.

Timeline expectations: The initial audit and core updates take 60-90 days, depending on how many properties exist and how fragmented the current language is. Then expect another 3-6 months for AI systems to fully reflect the aligned signals in their responses. Not instant, but systematic.

This work doesn't expand marketing's scope or require new services. It focuses existing effort on the signals that actually matter for how prospects encounter and understand your brand during research.

The Competitive Advantage

Most brands are still optimizing for volume:

- More content

- More campaigns

- More channels

- More features to announce

What they're missing is that none of that volume matters if the signals that already exist don't align.

The brands that audit and systematically align their signals first, whether working independently or with a digital marketing firm that specializes in brand discoverability, will own clearer, more confident positioning in AI discovery. Not because they gamed the systems or found some optimization trick, but because they made themselves genuinely easier to understand.

This isn't about manipulating AI tools. It's about making sure the brand you've built is actually understood the way you intended, by any system (human or automated) that tries to figure out what you do and whether you're relevant.

The marketing reality is straightforward: AI discovery is making visible what was always true. Clarity compounds. Ambiguity undermines. Brands that are easy to understand correctly have always had an advantage in the market. That advantage just matters more now, because the systems mediating discovery surface confusion faster than human prospects used to.

The question isn't whether to optimize for AI discovery. It's whether your existing signals are clear enough for any system (human or automated) to understand what you actually do.

If you're seeing signs that AI-generated descriptions of your brand don't match your positioning, or you're curious what a signal consistency audit would reveal, we're happy to talk through what that process looks like. Reach out to our team.
